In the Testing Center of Microsoft Test Manager there is a tab called 'Organize', which contains four sub-tabs.

Although the breadcrumbs indicate that you are inside a particular test plan, the four sub-tabs by default show all entities (test plans, test configurations, test cases, shared steps) within the TFS team project, which is rather confusing.

Overall, working with the columns is not quite as intuitive as one would wish:

- With a `secret trick`, you can add more columns to the visible ones: right-click the column header bar, and numerous additional columns can be selected for display.

- You can also sort a column in ascending or descending order by clicking its header once or twice, respectively.

- Some columns can also be filtered. When you hover the mouse over the right-hand corner of a column header, an arrow appears out of nowhere. If it stays gray, you cannot filter by the column's values; if it turns black, you can.

Given that the tabs are called 'Manager', it would also have been useful to show a count of all listed entities in each sub-tab.

Test Plan Manager

This tab lists all test plans of a TFS team project. One special feature is the 'Edit run settings' button, behind which you can extend the data collected during testing; further details: here.

Test Configuration Manager

Test configurations are best explained with concrete examples. One configuration variable for a test is, for example, the operating system; its values could be Windows, Linux or MacOS. Another configuration variable for testing web applications is the browser, which can take the values Firefox, Chrome, Internet Explorer, Safari or Opera. A configuration is one possible combination of values of the configuration variables, e.g. (Windows, Firefox). If the test manager assigns this configuration to the upcoming test run, I test the test plan against the application in Firefox on a Windows machine. This is exactly what you set up in the Test Configuration Manager sub-tab.
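To make "a configuration is one combination of values" concrete, here is a minimal Python sketch that enumerates every candidate configuration from the two example variables as a Cartesian product. It only illustrates the concept; it is not how MTM stores or creates configurations, and the dictionary layout and the `all_configurations` helper are hypothetical.

```python
from itertools import product

# Configuration variables and their values, taken from the examples in the text.
config_variables = {
    "Operating System": ["Windows", "Linux", "MacOS"],
    "Browser": ["Firefox", "Chrome", "Internet Explorer", "Safari", "Opera"],
}


def all_configurations(variables):
    """Hypothetical helper: return every combination of variable values,
    one dict per candidate configuration (Cartesian product)."""
    names = list(variables)
    value_lists = [variables[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


configs = all_configurations(config_variables)
print(len(configs))  # 3 operating systems x 5 browsers = 15 candidates
print(configs[0])    # the first combination: Windows with Firefox
```

Not every candidate makes sense in practice (Internet Explorer on Linux, for instance), so a test manager would typically define only the combinations that matter, such as (Windows, Firefox).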

Test Case Manager

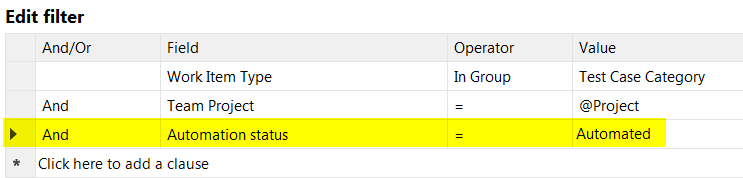

With a test suite containing a very large number of test cases, MTM initially freezes for a few seconds before you can continue working. You can then narrow down the selection of test cases using the usual column filters or an advanced filter; for the latter, click 'Unfiltered'. Here comes the next small confusion: although the drop-down menu showed 'Unfiltered', a filter appears that you can now edit. For example, you can narrow this default filter further by adding another clause, e.g. to return only automated test cases.

'Run query' shows the preliminary result, and 'Save query' finally saves the selection. Whether this nice feature is enough to earn the tab the title 'Manager' remains an open question.
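The effect of adding a clause to the default filter can be sketched in a few lines of Python: a query is modeled as a list of conditions that must all hold (AND), so each extra clause can only shrink the result. This is a conceptual illustration, not MTM's query engine. The `TestCaseItem` fields, the sample data, and the assumption that the default filter selects all test cases of the team project are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class TestCaseItem:
    """Hypothetical, simplified test case record."""
    id: int
    title: str
    team_project: str
    automated: bool


Clause = Callable[[TestCaseItem], bool]


def run_query(test_cases: List[TestCaseItem], clauses: List[Clause]) -> List[TestCaseItem]:
    """Return the test cases that satisfy ALL clauses (clauses are AND-combined)."""
    return [tc for tc in test_cases if all(clause(tc) for clause in clauses)]


# Hypothetical sample data.
cases = [
    TestCaseItem(1, "Login with valid user", "Shop", True),
    TestCaseItem(2, "Login with wrong password", "Shop", False),
    TestCaseItem(3, "Checkout", "Shop", True),
    TestCaseItem(4, "Unrelated test", "OtherProject", True),
]

# Assumed default filter: every test case in the current team project.
default_filter: List[Clause] = [lambda tc: tc.team_project == "Shop"]
# Additional clause: only automated test cases.
automated_only: List[Clause] = default_filter + [lambda tc: tc.automated]

print([tc.id for tc in run_query(cases, default_filter)])
print([tc.id for tc in run_query(cases, automated_only)])
```

Keeping the clauses as a list mirrors the editing workflow described above: you start from the default filter and append conditions rather than writing a new query from scratch.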

Shared Steps Manager

Shared steps are groups of test steps that can be reused in test cases (the popular standard example being the login). The Shared Steps Manager sub-tab works analogously to the Test Case Manager sub-tab. In addition, there is a 'Create action recording' button; what it does is explained here. Why such a button exists in the Shared Steps Manager but not in the Test Case Manager will probably remain the tool builders' secret forever.